Multiview 3D reconstruction is one of the fundamental tasks in computer vision. By estimating scene depth and camera poses, one can reconstruct the geometric structure of a real-world scene. That structure provides foundational data for object detection, segmentation, and background modeling.
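To make the link between depth, camera pose, and 3D geometry concrete, here is a minimal sketch that lifts a depth map into world-space points. It assumes an ideal pinhole camera with known intrinsics (`fx`, `fy`, `cx`, `cy`), per-pixel z-depth, and a camera-to-world pose given as a rotation `R` and translation `t`. The function names are hypothetical and for illustration only. This is not the method used in any of the papers listed below.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Sequence[Sequence[float]]


def backproject_pixel(u: float, v: float, depth: float,
                      fx: float, fy: float, cx: float, cy: float) -> Vec3:
    """Lift pixel (u, v) with z-depth `depth` into camera coordinates.

    Pinhole model (assumed): X_cam = depth * K^{-1} [u, v, 1]^T.
    """
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return (x, y, depth)


def camera_to_world(p_cam: Vec3, R: Mat3, t: Vec3) -> Vec3:
    """Apply an assumed camera-to-world pose: p_world = R @ p_cam + t."""
    return tuple(sum(R[i][j] * p_cam[j] for j in range(3)) + t[i]
                 for i in range(3))


def depth_map_to_points(depth_map: Sequence[Sequence[float]],
                        fx: float, fy: float, cx: float, cy: float,
                        R: Mat3, t: Vec3) -> List[Vec3]:
    """Convert a depth map (rows indexed by v, columns by u) to 3D world points."""
    points: List[Vec3] = []
    for v, row in enumerate(depth_map):
        for u, d in enumerate(row):
            if d <= 0:  # treat non-positive depth as missing and skip it
                continue
            p_cam = backproject_pixel(u, v, d, fx, fy, cx, cy)
            points.append(camera_to_world(p_cam, R, t))
    return points


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    depth = [[2.0, 0.0],
             [1.0, 4.0]]
    pts = depth_map_to_points(depth, 2.0, 2.0, 0.5, 0.5, identity, (0.0, 0.0, 1.0))
    print(pts)  # [(-0.5, -0.5, 3.0), (-0.25, 0.25, 2.0), (1.0, 1.0, 5.0)]
```

In a multiview setting, the depths and poses would themselves be estimated, for example from feature matches or light-field cues. Running this back-projection for every view with its own pose would then place all points in one shared world frame.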

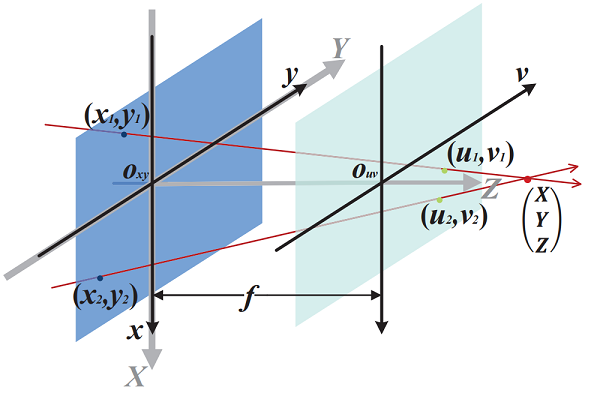

Rectifying Projective Distortion in 4D Light Field

Chunping Zhang, Zhe Ji, Qing Wang

ICIP 2016, Phoenix, USA, pp. 1464-1468, 2016 (2016.09.25-09.28)

Paper | Code | BibTeX | GitHub

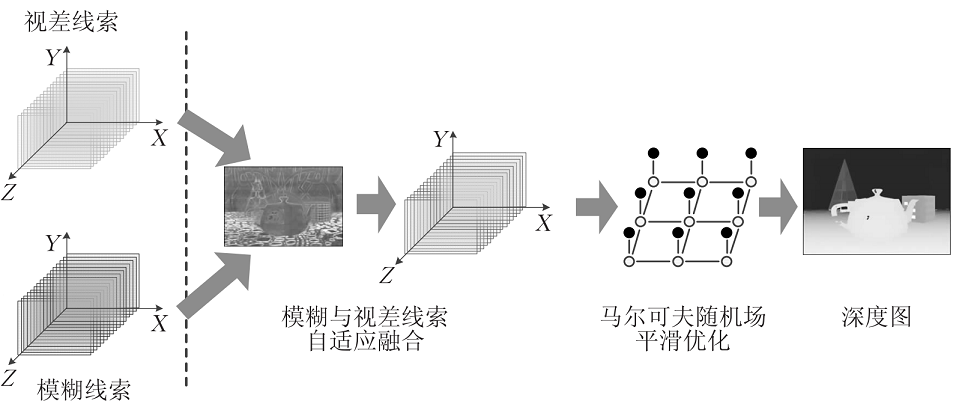

Depth Estimation from Light Field Analysis Based Multiple Cues Fusion

Degang Yang, Zhaolin Xiao, Heng Yang, Qing Wang

Chinese Journal of Computers (计算机学报)

Paper | Code | BibTeX | GitHub

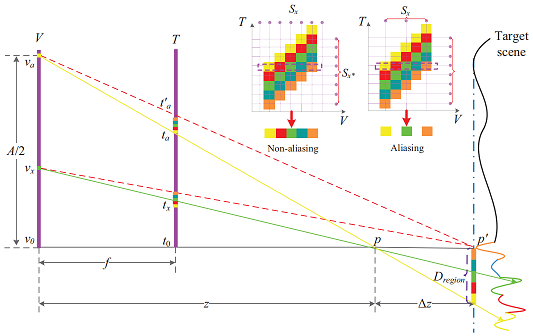

Aliasing Detection and Reduction in Plenoptic Imaging

Zhaolin Xiao, Qing Wang, Guoqing Zhou, Jingyi Yu

CVPR 2014, Columbus, USA, pp. 3326-3333, 2014 (2014.06.23-06.28)

Paper | Code | BibTeX | GitHub

A Resection Method Based on Enhanced Continuous Taboo Search

Guoqing Zhou, Qing Wang

Acta Electronica Sinica (电子学报)

Paper | Code | BibTeX | GitHub

"A man can be destroyed but not defeated." - Ernest Miller Hemingway